An Authenticity Layer for AI-Generated Content Detection in Human Review Workflows

Humanly is an authenticity intelligence platform designed to help organisations detect AI-generated or AI-manipulated content within human-submitted claims, applications and evidence. It does not replace human decision-making. Instead, it introduces a dedicated authenticity layer into existing review workflows, giving reviewers structured signals that can help them assess whether content is likely to be synthetic, manipulated or authentic.

As generative AI tools become more accessible, the skill required to commit fraud falls, and many organisations are seeing increased complexity in workflows that rely on user-submitted documents, images or digital media.

Manual review alone is increasingly difficult to sustain at this volume and level of sophistication. Humanly is designed to support reviewers by analysing authenticity signals and surfacing risk indicators that may warrant closer human scrutiny.

For enterprise teams managing fraud exposure, claims operations or trust and safety workflows, the question is no longer whether generative AI has been used to manipulate truth. The operational question is how to introduce structured authenticity analysis without removing human judgment. That is the ethos of the Humanly platform.

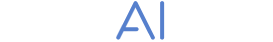

AI-Generated Content Detection Across Submission Types

Humanly analyses user-submitted content for signals that may be associated with AI generation or manipulation. Depending on configuration and workflow context, this can include written statements, structured forms or supporting documentation.

The platform is designed to:

- Analyse a range of authenticity signals within submitted content

- Surface risk indicators where patterns align with AI-generated characteristics

- Provide structured outputs that can be reviewed alongside the original submission

Humanly does not make final decisions on claims or applications. It provides additional perspective that can help reviewers prioritise cases and apply judgment more consistently.
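As an illustration only, the structured outputs described above might be represented along the following lines. The class names, the field names (`indicator`, `risk`, `explanation`), the 0.0 to 1.0 risk scale and the `prioritise` helper are all assumptions made for this sketch; they are not Humanly's actual schema or API.

```python
from dataclasses import dataclass, field


@dataclass
class RiskIndicator:
    """One authenticity signal surfaced for a reviewer (hypothetical shape)."""
    indicator: str     # short name of the signal
    risk: float        # assumed scale: 0.0 (low) to 1.0 (high)
    explanation: str   # human-readable reason shown next to the submission


@dataclass
class AuthenticityReport:
    """Structured output reviewed alongside the original submission."""
    submission_id: str
    indicators: list[RiskIndicator] = field(default_factory=list)

    def highest_risk(self) -> float:
        # A report with no indicators is treated as lowest priority.
        return max((i.risk for i in self.indicators), default=0.0)


def prioritise(reports: list[AuthenticityReport]) -> list[AuthenticityReport]:
    """Order the review queue by highest indicator risk.

    Nothing is approved, rejected or dropped here: every case still
    reaches a human reviewer, only the order changes.
    """
    return sorted(reports, key=lambda r: r.highest_risk(), reverse=True)
```

In this sketch the ordering only affects which cases a reviewer sees first; the decision on each claim or application remains with the reviewer.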

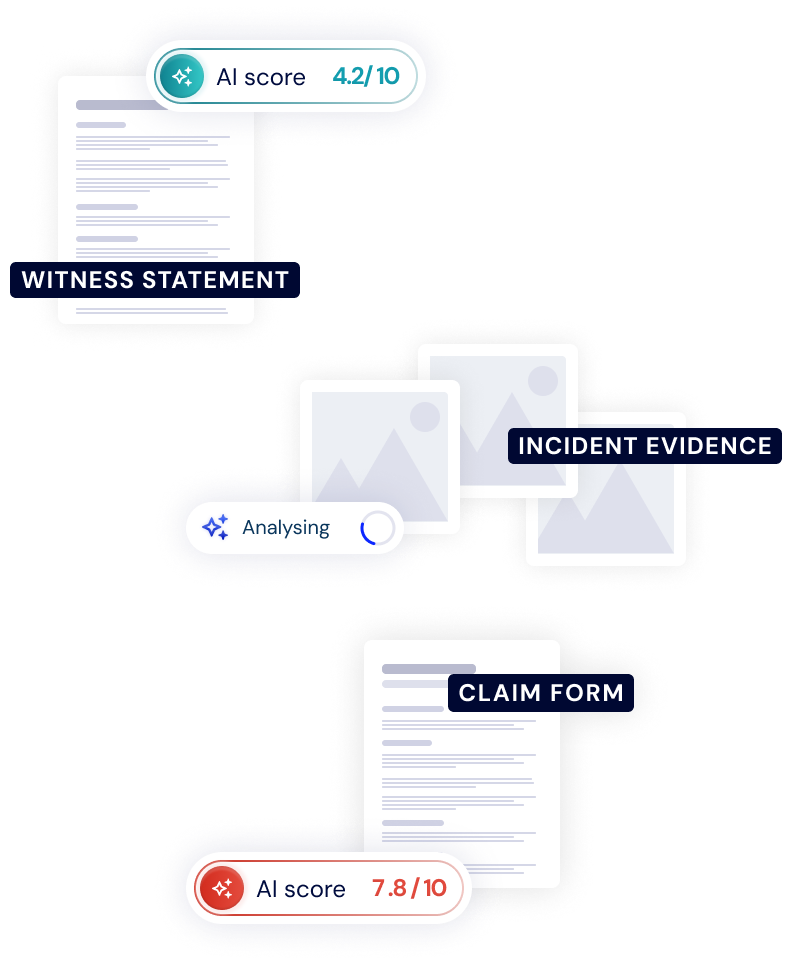

Explainable AI Perspective

Manual review can be highly skilled but operationally demanding. When reviewers are asked to assess large volumes of content, consistency can become difficult to maintain.

Humanly is designed to generate structured authenticity signals that may help:

- Standardise how AI-manipulation risk is assessed

- Reduce reliance on purely subjective pattern recognition

- Support more consistent review documentation

Outputs can be integrated into case management systems, enabling reviewers to see authenticity indicators directly within their existing workflow.

This approach strengthens decision integrity without attempting to automate judgment.

Built for SMEs and Large Integrators

For teams that require direct access to authenticity insights, Humanly provides an interface designed for operational clarity.

Key design principles include:

- Clear visibility of authenticity indicators

- Contextual display of analysed content

- Audit-friendly presentation of signals

- Accessibility across desktop and mobile environments

This ensures that authenticity analysis is not abstract or hidden in backend systems, but visible and actionable within the review process.

We also offer a full API suite for developers and integrators.

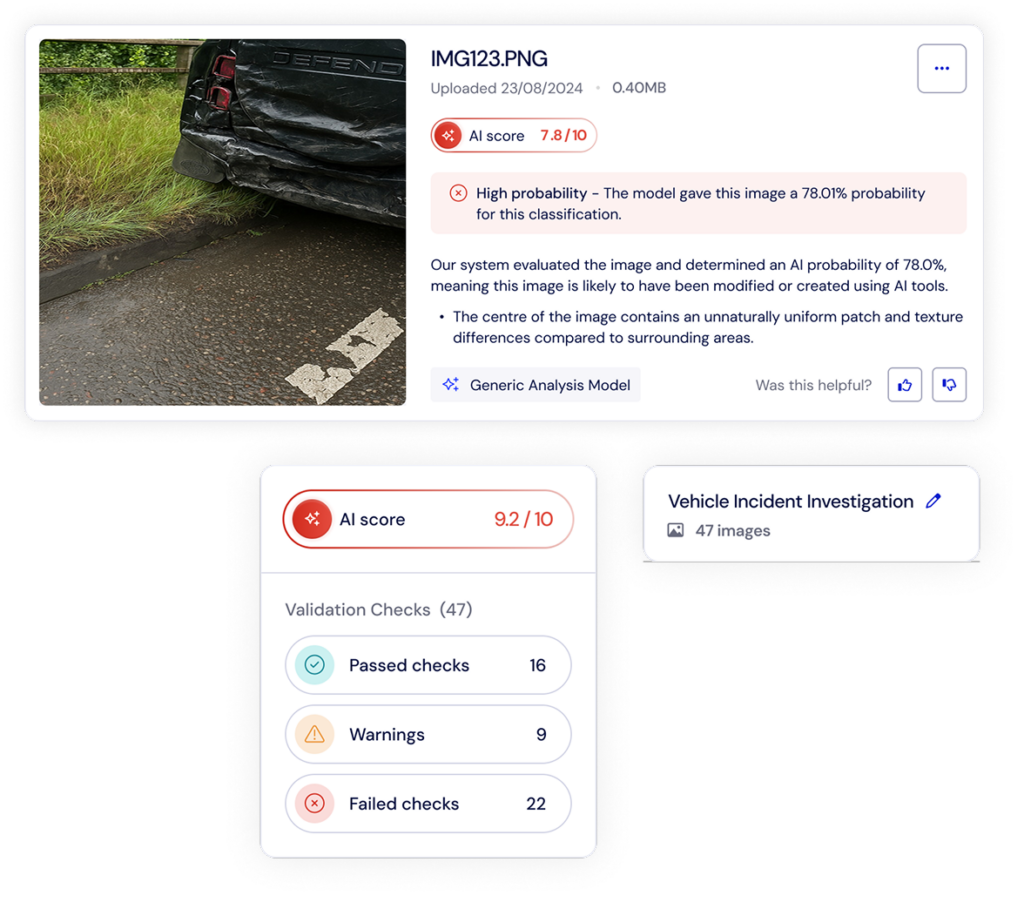

How Humanly Fits Into Existing Claims and Application Workflows

1. Content Classification

A user submits documentation and images as a collection under a project reference. Humanly automatically selects the appropriate model to run based on its understanding of the content.

2. Authenticity Analysis

Humanly analyses the submission for indicators of AI-generated or manipulated content using its proprietary multi-layered approach.

3. Explained Signals

The platform returns structured authenticity outputs for review, explaining its assessment of the submitted data. A trained reviewer then evaluates the case, using authenticity signals as one input among others. This model reinforces human oversight while introducing analytical depth that manual inspection alone may struggle to maintain at scale.
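The three steps can be sketched in code, purely as a rough illustration. Every name below (`Item`, `Signal`, `Analyser`, `run_workflow`) is hypothetical, and classification is reduced to a lookup on a pre-assigned content label, which stands in for the platform's own content understanding. This is not Humanly's API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Item:
    name: str
    content_type: str   # assumed label such as "document" or "image"


@dataclass
class Signal:
    item: str           # which submitted item the signal refers to
    model: str          # which analyser produced it
    explanation: str    # reviewer-facing reason for the signal


# Hypothetical analyser: takes one item, returns explained signals.
Analyser = Callable[[Item], list[Signal]]


def run_workflow(project_ref: str,
                 items: list[Item],
                 analysers: dict[str, Analyser]) -> dict:
    signals: list[Signal] = []
    unanalysed: list[str] = []
    for item in items:
        # Step 1: pick the analyser that matches the item's content type.
        analyser = analysers.get(item.content_type)
        if analyser is None:
            # Nothing suitable: flag the item rather than guess.
            unanalysed.append(item.name)
            continue
        # Step 2: run the authenticity analysis for this item.
        signals.extend(analyser(item))
    # Step 3: return explained signals; the decision is left to a reviewer.
    decision: Optional[str] = None
    return {
        "project_ref": project_ref,
        "signals": signals,
        "unanalysed": unanalysed,
        "decision": decision,
    }
```

The `decision` field is deliberately left empty in this sketch: the output carries signals and explanations, and a trained reviewer supplies the outcome.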